The Biophysics of Speech Production

Speech Is Not Just a Waveform. It Is a System.

Talking feels effortless. But a single spoken sentence requires the precise coordination of over 100 muscles, neural planning, biomechanical forces, and continuous sensory feedback, all synchronized within milliseconds.

When our health changes, this system does not fail quietly. In our previous post, we introduced the concept of direct and indirect pathways linking health conditions to vocal changes. Neurological diseases, cognitive or psychiatric conditions, and structural damage can each perturb speech production in different ways. Some conditions act directly on the machinery that produces sound, for example by affecting muscle control or tissue mechanics. Others influence voice indirectly, by altering cognition, emotion, or autonomic regulation. For instance, a vocal fold lesion can directly disrupt sound production, while slowed speech from cognitive fatigue represents an indirect effect. Yet these different disruptions can surface as similar, sometimes overlapping, acoustic patterns, which makes them hard to interpret without understanding where in the system the changes originate.

To use voice as a meaningful health signal, we must treat it as a system and not just an audio waveform.

This article breaks down the biology and physics of speech production and maps how disruptions at different stages of the system surface in the voice. It also explains why this mechanistic understanding matters for building interpretable, clinically useful voice-based health technologies.

The Mechanics of Voice

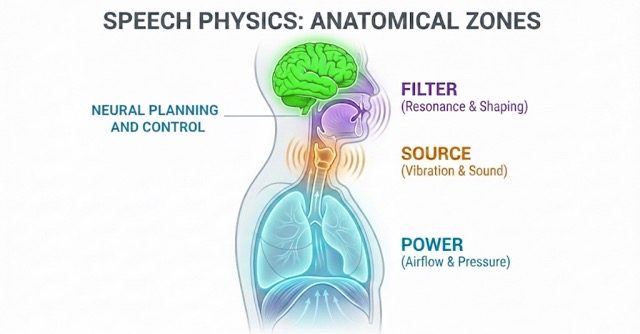

Beyond its linguistic meaning, voice is a physical signal whose production relies on three tightly coupled anatomical subsystems: an air supply, a vibration source, and a filter.

- Air Supply (Lungs and Diaphragm): The lungs and diaphragm generate and regulate airflow, pushing air upward through the trachea toward the larynx. This airflow provides the power for voice.

- Vibration Source (Vocal Folds): In the larynx, the vocal folds (layered structures of muscle and connective tissue) convert airflow into sound through vibration. Pitch is primarily determined by vocal fold length and tension, while loudness is driven mainly by subglottal pressure from the lungs. Neural input continuously adjusts fold tension and closure, fine-tuning pitch, loudness, and stability. Age, gender, and health conditions can influence vibration patterns, subtly altering the sound.

- Filter (Vocal Tract): The sound from the vocal folds then passes through the vocal tract (the throat, mouth, and nasal cavity), which acts as a resonator that shapes the raw vibrations into distinct speech sounds. Movements of the tongue, lips, jaw, and soft palate change the shape of the tract and therefore its resonances, producing the characteristic vowels, consonants, and timbre of speech.
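Two textbook idealizations make the source and filter roles above concrete. The first is an ideal-string model of a vocal fold, which gives F0 = (1/(2L))·√(σ/ρ). Here L is the vibrating length, σ the longitudinal stress in the tissue, and ρ the tissue density. The second treats a neutral vocal tract as a uniform tube, closed at the glottis and open at the lips, which resonates at F_n = (2n − 1)c/(4L_tract). The sketch below evaluates both. The numbers used are illustrative assumptions, not measurements: a 16 mm fold, 15 kPa stress, 1040 kg/m³ density, a 17.5 cm tract, and c = 350 m/s. Real folds and tracts depart substantially from these idealizations.

```python
import math


def string_model_f0(length_m, stress_pa, density_kg_m3=1040.0):
    """Ideal-string estimate of vocal fold F0 in Hz: (1 / 2L) * sqrt(stress / density)."""
    return math.sqrt(stress_pa / density_kg_m3) / (2.0 * length_m)


def quarter_wave_formants(tract_length_m, n_formants=3, speed_of_sound_m_s=350.0):
    """Resonances (Hz) of a uniform tube closed at one end: (2n - 1) * c / (4L)."""
    return [(2 * n - 1) * speed_of_sound_m_s / (4.0 * tract_length_m)
            for n in range(1, n_formants + 1)]


# Illustrative values only (assumptions, not measurements).
f0 = string_model_f0(length_m=0.016, stress_pa=15_000)
formants = quarter_wave_formants(tract_length_m=0.175)
print(f"F0 ~ {f0:.0f} Hz; formants ~ {[round(f) for f in formants]} Hz")
```

In the string model, lengthening a fold at constant stress would lower F0. In real phonation, stretching the folds raises their stress faster than their length, which is why a longer, tenser fold produces a higher pitch.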

In short, the lungs provide power, the vocal folds generate sound, and the vocal tract shapes it into speech. This is known as the source–filter framework: it models speech mathematically as an excitation signal (the source) passed through the acoustic transfer function of the vocal tract (the filter). The framework underpins technologies such as articulatory speech synthesis, which digitally reconstructs the human voice by simulating these physical parameters. It also offers a roadmap for asking whether a health condition is disrupting the sound source (airflow and vocal fold vibration) or the resonant shaping performed by the articulators.
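The source–filter idea can be sketched in a few lines. This is a minimal illustration under simplifying assumptions, not a production synthesizer. The source is idealized as a unit impulse train at F0, whereas real glottal pulses are smoother. The filter is a cascade of second-order digital resonators, one per formant, using the Klatt-style coefficient formulas. The function names and the formant and bandwidth values are hypothetical choices made for illustration.

```python
import math


def impulse_train(f0_hz, duration_s, fs):
    """Idealized source: one unit impulse at the start of every glottal cycle."""
    n = int(duration_s * fs)
    period = fs / f0_hz  # samples per glottal cycle
    x = [0.0] * n
    t = 0.0
    while int(round(t)) < n:
        x[int(round(t))] = 1.0
        t += period
    return x


def formant_resonator(x, freq_hz, bw_hz, fs):
    """Second-order digital resonator (Klatt-style) modelling one formant.

    y[n] = a*x[n] + b*y[n-1] + c*y[n-2]; coefficients place the resonance at
    freq_hz with bandwidth bw_hz and give unity gain at 0 Hz.
    """
    T = 1.0 / fs
    c = -math.exp(-2.0 * math.pi * bw_hz * T)
    b = 2.0 * math.exp(-math.pi * bw_hz * T) * math.cos(2.0 * math.pi * freq_hz * T)
    a = 1.0 - b - c
    y, y1, y2 = [], 0.0, 0.0
    for xn in x:
        yn = a * xn + b * y1 + c * y2
        y.append(yn)
        y2, y1 = y1, yn
    return y


def source_filter(f0_hz, formants, duration_s=0.5, fs=16000):
    """Speech-like signal: the source passed through cascaded formant resonators."""
    signal = impulse_train(f0_hz, duration_s, fs)
    for freq_hz, bw_hz in formants:
        signal = formant_resonator(signal, freq_hz, bw_hz, fs)
    return signal


# Same filter (neutral-vowel-like formants), two different sources.
neutral = [(500, 60), (1500, 90), (2500, 120)]  # (formant Hz, bandwidth Hz), illustrative
low_voice = source_filter(100, neutral)
high_voice = source_filter(200, neutral)
```

Changing `f0_hz` alters only the source (pitch), while changing the formant list alters only the filter (vowel quality). This separation mirrors how a condition may act on one subsystem but not the other.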

Disruption at any stage (airflow, vibration, or filtering) leaves a measurable imprint on the acoustic signal. These changes can be captured broadly through temporal patterns, spectral characteristics, prosody, and motor control markers, allowing researchers to link voice changes to underlying structural, physiological, or neural dysfunction.

Neural Planning and Control

Producing speech takes more than moving muscles; it requires precise neural planning and coordination. Every word we utter begins in the brain, where cognitive and linguistic processes determine what we want to say and how to say it. Several key brain regions coordinate this complex process:

- Prefrontal cortex: organizes and plans speech sequences.

- Temporal lobe / Wernicke's area: retrieves word forms and maps them to the correct sound sequences (phonological retrieval).

- Broca's area (left hemisphere): sequences motor commands for articulation.

- Primary motor cortex: executes these commands. Signals travel via brainstem motor nuclei and the cranial nerves (e.g., vagus, hypoglossal, facial, and trigeminal) to the larynx, tongue, lips, jaw, and soft palate, and via spinal nerves (e.g., phrenic and intercostal) to the breathing muscles.

- Auditory cortex: monitors the sound of your own voice in real time, providing feedback that helps the brain adjust pitch, loudness, and articulation as you speak.

- Limbic and paralimbic regions: regulate the emotional tone of speech, shaping prosody, pitch variation, and expressive timing to convey affect.

These neural circuits synchronize timing, force, and precision across the vocal system. Even small disruptions caused by neurological disease, cognitive decline, or psychiatric conditions can slow speech, reduce clarity, or alter prosody.

This is where the direct and indirect pathways from our previous post come into play. By understanding these pathways and the underlying neural control, we can begin to see why voice provides a window into both neurological and mental health conditions, and why analyzing subtle acoustic changes can reveal patterns that are not obvious from words alone.
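The auditory feedback loop listed among these circuits can be caricatured as a proportional controller, in a deliberately simplified way. At each step the speaker hears their own pitch, possibly shifted by an external perturbation. They compare it with an intended target and correct a fraction of the error. The function name, gain, and perturbation size below are hypothetical. Real pitch control involves neural delays and feedforward commands, as in the models described by Guenther (2016). Compensation for shifted feedback is also typically only partial, unlike in this toy.

```python
def simulate_pitch_feedback(target_hz, start_hz, perturbation_hz=0.0, gain=0.3, steps=20):
    """Toy proportional auditory-feedback loop for pitch (hypothetical model).

    Each step: heard pitch = produced pitch + perturbation; the speaker then
    corrects `gain` times the error between the target and what was heard.
    Returns the produced pitch at every step, including the starting value.
    """
    if not 0.0 < gain <= 1.0:
        raise ValueError("gain must be in (0, 1]")
    produced = start_hz
    history = [produced]
    for _ in range(steps):
        heard = produced + perturbation_hz
        error = target_hz - heard
        produced += gain * error
        history.append(produced)
    return history


# Feedback shifted up by 10 Hz: the toy speaker drifts down to compensate.
trace = simulate_pitch_feedback(target_hz=200, start_hz=200, perturbation_hz=10)
print([round(f, 1) for f in trace[:5]])
```

A lower `gain` makes corrections slower, which is one crude way to picture how weakened feedback control might show up as less stable pitch.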

How do health conditions affect our voice?

The human voice reflects both the physical machinery and the neural control behind it. Different conditions disrupt these systems in characteristic ways, leaving measurable signatures in speech. Understanding these patterns helps researchers and clinicians link acoustic changes to underlying physiology and neural function.

Here are three illustrative examples:

1. Major Depressive Disorder (MDD)

MDD affects both neural planning and physiological control. Symptoms such as psychomotor slowing, fatigue, insomnia, and diminished cognitive function can alter speech planning and execution.

Voice manifestations include:

- Slower speech rate and longer pauses

- Reduced pitch and tonal variation (flattened prosody)

- Hesitations or filler words during word retrieval

- Changes in vocal energy or effort

These effects reflect indirect pathways: cognitive slowing, reduced affect, and autonomic changes alter muscle tension and coordination without directly damaging the motor systems that produce voice.

These changes can be captured through broad acoustic categories such as timing patterns, prosody, and motor control markers, which let systems sense them without committing in advance to specific features.
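As one illustration of the timing and prosody categories, here is a minimal sketch. It assumes that per-frame energies (in dB) and an F0 contour (in Hz, with 0 for unvoiced frames) have already been extracted upstream, for example with a tool such as Praat. The function names and default thresholds are hypothetical: a 10 ms hop, frames 40 dB below the peak treated as silent, and a 250 ms minimum pause. They would need tuning and validation for any real recording setup.

```python
import math
from statistics import pstdev


def pause_stats(frame_db, hop_s=0.01, silence_db=-40.0, min_pause_s=0.25):
    """Pause summary from per-frame energies in dB (hypothetical thresholds).

    Frames more than `silence_db` below the loudest frame count as silent.
    Leading/trailing silence is trimmed, and silent runs shorter than
    `min_pause_s` (e.g., stop-consonant closures) are not counted as pauses.
    """
    peak = max(frame_db)
    silent = [db - peak < silence_db for db in frame_db]
    speech_idx = [i for i, s in enumerate(silent) if not s]  # never empty: peak frame is speech
    silent = silent[speech_idx[0]:speech_idx[-1] + 1]
    min_frames = max(1, int(round(min_pause_s / hop_s)))
    pauses, run = [], 0
    for s in silent + [False]:  # trailing False flushes the final run
        if s:
            run += 1
        else:
            if run >= min_frames:
                pauses.append(run * hop_s)
            run = 0
    total_s = len(silent) * hop_s
    return {
        "n_pauses": len(pauses),
        "mean_pause_s": sum(pauses) / len(pauses) if pauses else 0.0,
        "pause_ratio": sum(pauses) / total_s,
    }


def f0_variability_semitones(f0_hz):
    """Std. dev. of voiced F0 in semitones; lower values mean flatter prosody."""
    voiced = [f for f in f0_hz if f > 0]
    if len(voiced) < 2:
        return 0.0
    ref = sum(voiced) / len(voiced)  # the reference cancels out of the std. dev.
    return pstdev([12.0 * math.log2(f / ref) for f in voiced])
```

Semitones are used rather than raw Hz so that pitch variability is comparable across speakers with different baseline pitch.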

2. Cognitive Decline

Cognitive decline primarily disrupts speech planning and sequencing, often affecting the temporal and frontal lobes, which are critical for word retrieval and sentence construction.

Voice manifestations include:

- Frequent hesitations and filler words ("um," "uh")

- Slower articulation and reduced speech rate

- Simplified or vague word choice

These are largely indirect effects, reflecting planning or memory deficits rather than direct vocal impairment. They are detectable through broad signal characteristics such as temporal structure, articulatory precision, and rhythm patterns.
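Some of these markers can be approximated from a transcript. The sketch below computes a filler rate per 100 words and a type-token ratio, a crude proxy for lexical diversity and hence for simplified word choice. It assumes the transcript keeps disfluencies, which many automatic transcription systems may drop by default. The filler list is an illustrative, English-only assumption, and the function name is hypothetical.

```python
import re

FILLERS = {"um", "uh", "er", "erm", "uhm"}  # illustrative, English-only list (assumption)


def hesitation_profile(transcript):
    """Filler rate and type-token ratio from a disfluency-preserving transcript."""
    words = re.findall(r"[a-z']+", transcript.lower())
    if not words:
        return {"n_words": 0, "fillers_per_100_words": 0.0, "type_token_ratio": 0.0}
    n_fillers = sum(w in FILLERS for w in words)
    content = [w for w in words if w not in FILLERS]
    ttr = len(set(content)) / len(content) if content else 0.0
    return {
        "n_words": len(words),
        "fillers_per_100_words": 100.0 * n_fillers / len(words),
        "type_token_ratio": ttr,
    }


print(hesitation_profile("The um boy is uh taking the the cookie"))
```

Type-token ratio falls as texts get longer, so comparisons are only meaningful between samples of similar length, such as responses to the same picture-description task.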

3. Direct Structural Damage (e.g., Laryngeal Cancer, Vocal Fold Paralysis)

Conditions that physically alter the vocal folds or articulatory system produce direct effects on phonation and articulation. Lesions, tumors, or neuromuscular damage introduce irregularities in vibration and airflow.

Voice manifestations include:

- Reduced ability to sustain phonation

- Roughness from asymmetric or irregular vocal fold vibration, or breathiness from incomplete vocal fold closure

- Reduced loudness or dynamic range, leading to a weak or quiet voice

These effects appear in broad acoustic domains such as spectral balance, voice quality, motor control patterns, and temporal stability, directly reflecting the physical disruption.
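Jitter and shimmer are two classic voice-quality measures of this kind of vibratory irregularity. The sketch below implements their "local" forms: the mean absolute difference between consecutive cycles divided by the mean value. This matches the local definitions documented for Praat. It assumes glottal cycle periods (in seconds) and per-cycle peak amplitudes have already been extracted, for example by Praat's pulse detection. The function names are hypothetical, and no clinical cut-off values are implied.

```python
def _local_perturbation(values):
    """Mean absolute difference between consecutive cycles, divided by the mean value."""
    if len(values) < 2:
        raise ValueError("need at least two glottal cycles")
    diffs = [abs(a - b) for a, b in zip(values, values[1:])]
    return (sum(diffs) / len(diffs)) / (sum(values) / len(values))


def jitter_local(periods_s):
    """Cycle-to-cycle instability of the vibration period (relative, 0 = perfectly regular)."""
    return _local_perturbation(periods_s)


def shimmer_local(amplitudes):
    """Cycle-to-cycle instability of the cycle peak amplitude (relative)."""
    return _local_perturbation(amplitudes)


steady = [0.008] * 10                       # a perfectly periodic 125 Hz voice
irregular = [0.008, 0.0086, 0.0077, 0.0084, 0.0079]
print(f"jitter: steady {jitter_local(steady):.2%}, irregular {jitter_local(irregular):.2%}")
```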

Understanding Voice Mechanics For Better System Design

Detecting health conditions from speech is inherently challenging: the human voice is a highly variable signal, shaped by each speaker's anatomy, neuromuscular control, and demographics. On top of this, the same condition can manifest differently depending on severity and compensation strategies, while comorbidities often produce overlapping acoustic signatures.

This is where knowing how voice is produced and controlled becomes a strategic advantage: it provides a framework for navigating this complexity.

Understanding which subsystem is driving a signal (the lungs, vocal folds, vocal tract, or neural planning) allows us to design systems that are resilient to these challenges:

- Protocol design: Knowledge of the voice production system allows for the creation of targeted elicitation tasks that "stress test" specific subsystems. For example, instead of relying solely on natural conversation, researchers can use Maximum Phonation Time (MPT) to isolate respiratory support or Diadochokinetic (DDK) tasks to evaluate the motor precision of the articulatory filter. Such design strategies can provide datasets with a higher signal-to-noise ratio by isolating specific subsystems.

- Feature selection: Choosing acoustic measures that reflect the relevant physiological or neural changes. For example, motor disruptions might be tracked through temporal and stability patterns, while cognitive or affective changes appear in timing, rhythm, and prosody.

- Modeling strategy: Aligning the type of signal with the right computational approach. For example, models of sequential structure may suit planning or cognitive disruptions, which unfold as changes in timing across an utterance, while spectral representations may better capture mechanical or phonatory effects.

- Interpretability: Linking voice signals to biological and neural mechanisms allows clinicians and researchers to understand why a system flagged a patient, increasing trust and enabling actionable insights.

- Deployment design: Differentiating direct and indirect pathways informs use cases such as screening, monitoring, or triage and helps anticipate confounds in real-world settings.
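The two protocol tasks named above reduce to simple measurements once the audio has been segmented. The sketch below assumes a per-frame voicing decision for the sustained vowel (MPT) and a sorted list of syllable onset times for the "pa-ta-ka" task (DDK) are produced upstream. The function names, the 10 ms hop, and the choice of the coefficient of variation as a regularity measure are illustrative assumptions.

```python
from statistics import mean, pstdev


def max_phonation_time(voiced, hop_s=0.01):
    """Longest uninterrupted run of voiced frames, in seconds (MPT proxy)."""
    best = run = 0
    for v in voiced:
        run = run + 1 if v else 0
        best = max(best, run)
    return best * hop_s


def ddk_metrics(onsets_s):
    """DDK rate (syllables/s) and interval regularity from sorted syllable onsets."""
    if len(onsets_s) < 3:
        raise ValueError("need at least three syllable onsets")
    intervals = [b - a for a, b in zip(onsets_s, onsets_s[1:])]
    rate = len(intervals) / (onsets_s[-1] - onsets_s[0])
    cv = pstdev(intervals) / mean(intervals)  # coefficient of variation; 0 = perfectly regular
    return {"rate_syll_per_s": rate, "interval_cv": cv}


print(ddk_metrics([0.0, 0.2, 0.4, 0.6, 0.8]))
```

This version never bridges brief voicing dropouts, so a single misclassified frame splits the run. A real pipeline would likely smooth the voicing track first.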

By grounding voice-based systems in physics, biology, and neural control, we ensure that the signals these systems rely on are meaningful, specific, and clinically interpretable, rather than just statistically correlated.

Ultimately, the physics of speech serves as the guardrail that keeps AI grounded in reality. It transforms voice analysis from a "black box" into a transparent clinical instrument, one that not only predicts health outcomes but also explains the physiology behind them.

Glossary of Terms

Biological Mechanisms

Air Supply (Power): The system of lungs and diaphragm that generates and regulates pressurized airflow, providing the energy for voice.

Excitation Signal: The raw sound (source) produced by the vibration of the vocal folds before it is shaped by the vocal tract.

Acoustic Transfer Function: The mathematical description of how the vocal tract filters and shapes the sound source into specific speech sounds.

Auditory Feedback Loop: The process by which the brain (via the auditory cortex) monitors the sound of its own voice in real time to instantly correct pitch, loudness, and articulation.

Direct Pathways: The motor and structural systems that physically execute speech. Disruptions here (e.g., nerve damage, laryngeal lesions) directly impair the "hardware" of voice.

Indirect Pathways: The cognitive, emotional, and autonomic systems that modulate speech. Disruptions here (e.g., depression, cognitive load) alter the "software" of voice, changing rhythm and tone without damaging the physical structures.

Larynx (Vocal Folds): The "voice box" containing the vocal folds. It acts as the source of sound, where muscle tension and airflow interact to create vibration.

Limbic / Paralimbic Regions: Brain areas responsible for emotional regulation. They infuse speech with "affect," controlling the expressive timing and pitch variation we hear as emotion.

Source–Filter Framework: The foundational model of speech production: the lungs provide power, the vocal folds act as the sound source, and the vocal tract acts as the filter to shape that sound into speech.

Vocal Tract: The series of resonators (throat, mouth, nose) that shape raw sound into intelligible vowels and consonants through the movement of the tongue, lips, and jaw.

Wernicke's Area: A region in the temporal lobe responsible for phonological retrieval, the process of retrieving the correct sound codes (word forms) to match a speaker's intent.

Acoustic Markers & Features

Acoustic Roughness: A perceptually harsh or "noisy" voice quality, often caused by asymmetrical or irregular vocal fold vibration (common in direct structural damage).

Formants: The resonant frequencies of the vocal tract. Changing the shape of the mouth changes the formants, which is why formant measurements can be used to assess articulatory precision.

Jitter & Shimmer: Measures of "perturbation" or instability. Jitter refers to cycle-to-cycle variation in the vibration period (heard as pitch); shimmer refers to cycle-to-cycle variation in amplitude (heard as loudness). High values are often markers of direct structural or motor dysfunction.

Prosody: The "melody" of speech, including rhythm, stress, and intonation. Flattened prosody (monotone speech) is a key indirect marker of conditions like depression.

Psychomotor Slowing: A clinical term for the visible and audible "slowing down" of physical and mental activity, often manifesting as longer pauses and a slower speech rate.

Spectral Characteristics: Features related to the frequency content of the voice (e.g., pitch, timbre, harmonics). These often reflect the mechanical health of the vocal folds.

Temporal Patterns: Timing-based features of speech, such as rate, pause duration, and rhythm, which often reflect the efficiency of neural planning and cognitive health.

Assessment Protocols

Maximum Phonation Time (MPT): A measure of the longest duration an individual can sustain a vowel sound, used to assess respiratory support (power) and glottal efficiency.

Diadochokinetic (DDK) Rate: A task requiring the rapid repetition of syllables (e.g., "pa-ta-ka") to evaluate the motor coordination and speed of the articulatory "filter."

References

- Simonyan, K., & Horwitz, B. (2011). Laryngeal motor cortex and control of speech in humans. The Neuroscientist, 17(2), 197-208. https://doi.org/10.1177/1073858410386727

- Rabiner, L., & Schafer, R. (2010). Theory and applications of digital speech processing. Prentice Hall Press.

- Birkholz, P. (2013). Modeling consonant-vowel coarticulation for articulatory speech synthesis. PLoS ONE, 8(4), e60603.

- Hickok, G., & Poeppel, D. (2007). The cortical organization of speech processing. Nature Reviews Neuroscience, 8(5), 393-402.

- Schirmer, A., & Kotz, S. A. (2006). Beyond the right hemisphere: brain mechanisms mediating vocal emotional processing. Trends in Cognitive Sciences, 10(1), 24-30.

- Guenther, F. H. (2016). Neural control of speech. MIT Press.

- Cummins, N., et al. (2015). A review of depression and suicide risk assessment using speech analysis. Speech Communication.

- Forbes-McKay, K. E., & Venneri, A. (2005). Detecting subtle spontaneous language decline in early Alzheimer's disease with a picture description task. Neurological Sciences, 26(4), 243-254.

- Walton, C., Conway, E., Blackshaw, H., & Carding, P. (2017). Unilateral vocal fold paralysis: a systematic review of speech-language pathology management. Journal of Voice, 31(4), 509-e7.

- Maslan, J., Leng, X., Rees, C., Blalock, D., & Butler, S. G. (2011). Maximum phonation time in healthy older adults. Journal of Voice, 25(6), 709-713. https://doi.org/10.1016/j.jvoice.2010.10.002

- Ackermann, H., Hertrich, I., & Hehr, T. (1995). Oral diadochokinesis in neurological dysarthrias. Folia Phoniatrica et Logopaedica, 47(1), 15-23.

Further Reading

- Principles of voice production, Ingo R. Titze; Daniel W. Martin, The Journal of the Acoustical Society of America, 1998.

- Computer-Implemented Articulatory Models for Speech Production: A Review, Bernd J Kröger, 2022, Frontiers in Robotics and AI

- Praat: doing phonetics by computer [Computer program], Paul Boersma, David Weenink, http://www.praat.org/

- Vocal affect expression: a review and a model for future research, Klaus R. Scherer, 1986, Psychological Bulletin.

- Automated assessment of psychiatric disorders using speech: A systematic review, Low et al., 2020, Laryngoscope Investigative Otolaryngology